Dubber provides a comprehensive set of APIs that enable service providers, platforms, and enterprises to embed conversation recording, playback, compliance, and voice intelligence directly into their products and workflows. Cloud-native by design and built for embedding, the APIs offer unlimited scale and platform parity.

What our APIs enable

- Automated provisioning & lifecycle management at scale

- Programmatic access to recorded conversations

- Integration with enterprise and SaaS platforms

- Compliance, supervision, and analytics use cases

- Fully automated and embedded experiences

API-first architecture

Dubber is built on an API-first, cloud-native architecture supporting global scale, resilience, and continuous evolution.

Core capability areas

- Provisioning & Service Management

- Recording & Media Access

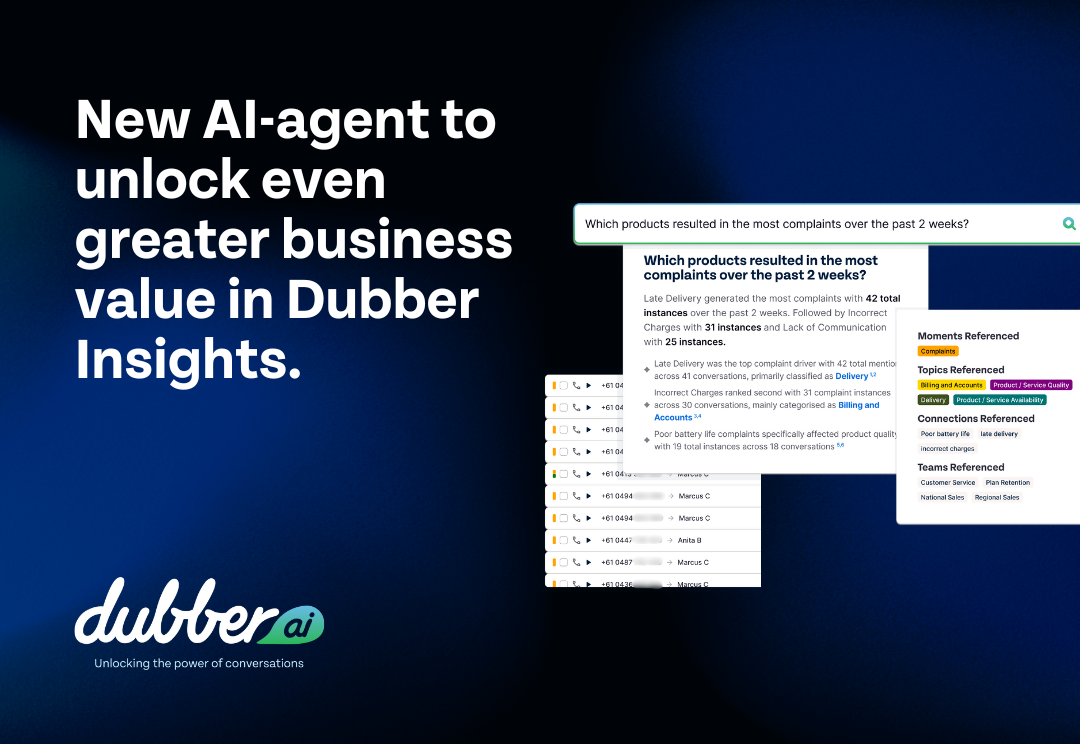

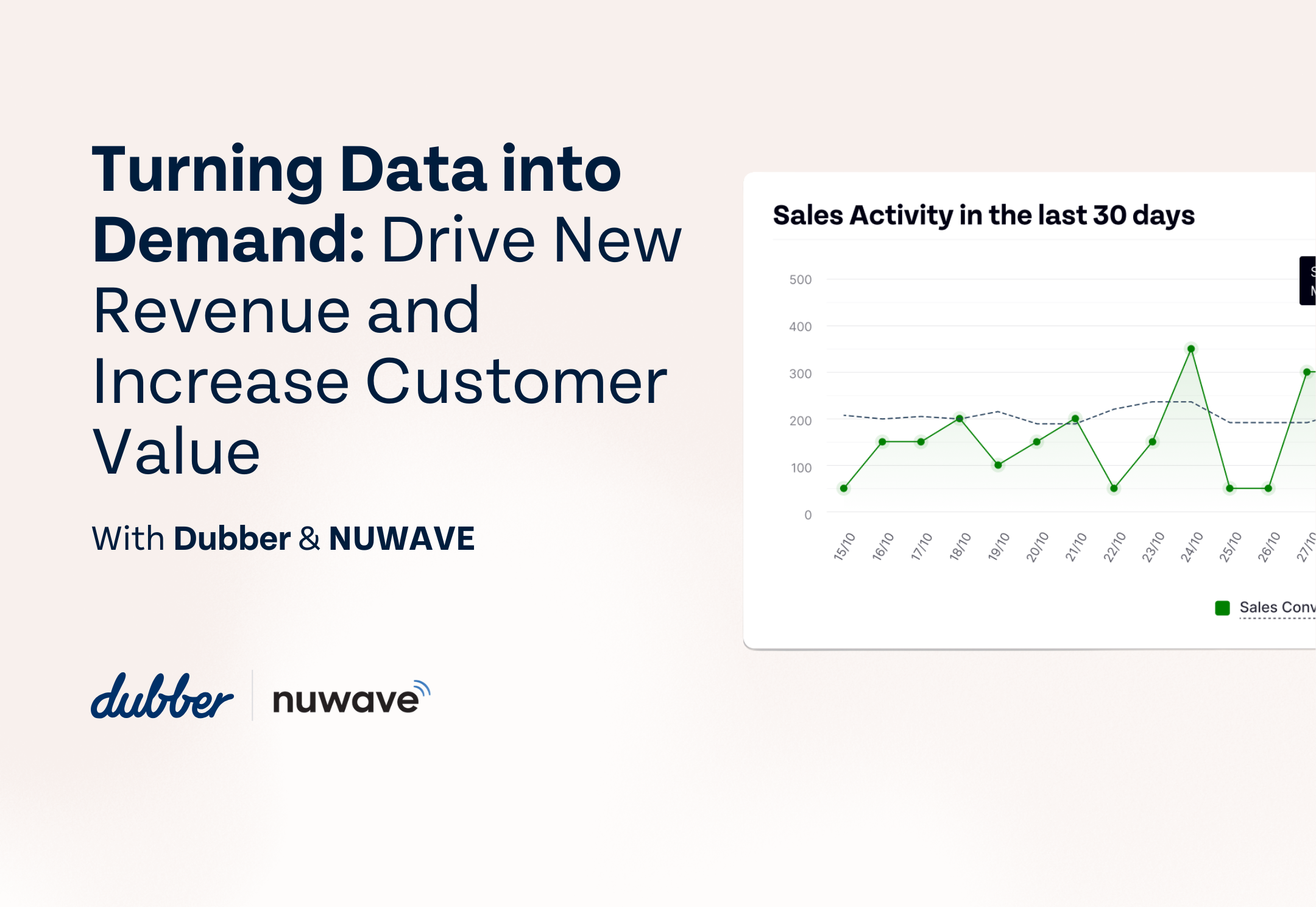

- Voice Intelligence & Insights

- Events & Workflow Automation

Typical use cases

- Embedded call recording

- CRM and compliance integrations

- AI and analytics pipelines

- Industry-specific voice data solutions

Scalability – Designed for carrier-grade and enterprise workloads with unlimited users, recordings, and regions.

Security & Access Control – Enterprise-grade authentication, authorization, tenant isolation, and API gateway protections.

Versioning & Compatibility – Versioned APIs with backward compatibility to protect customer integrations.

Developer Enablement – Self-service onboarding, sandbox environments, live documentation, and tooling.

Reliability – Production SLAs, monitoring, and high-availability architecture.

Dubber is a global cloud call recording & conversation intelligence platform trusted by service providers and enterprises worldwide. For more technical information, visit our developer portal or contact us.